Letter to the Editor: The Opinion Section Has an AI Problem

In a Letter to the Editor, Max Froomkin ’28 examines patterns in The Student’s opinion section that raise questions about the growing use of AI‑generated prose.

A year ago, for April Fools’ Day, The Student announced its transition to AI-written articles because writers are “tired of the simple fact that we have to do the work.” Wouldn’t it be funny if, right around then, The Student started actually publishing AI-written articles?

I believe that it did. AI use is notoriously hard to prove, but I’ll do my best to make my case.

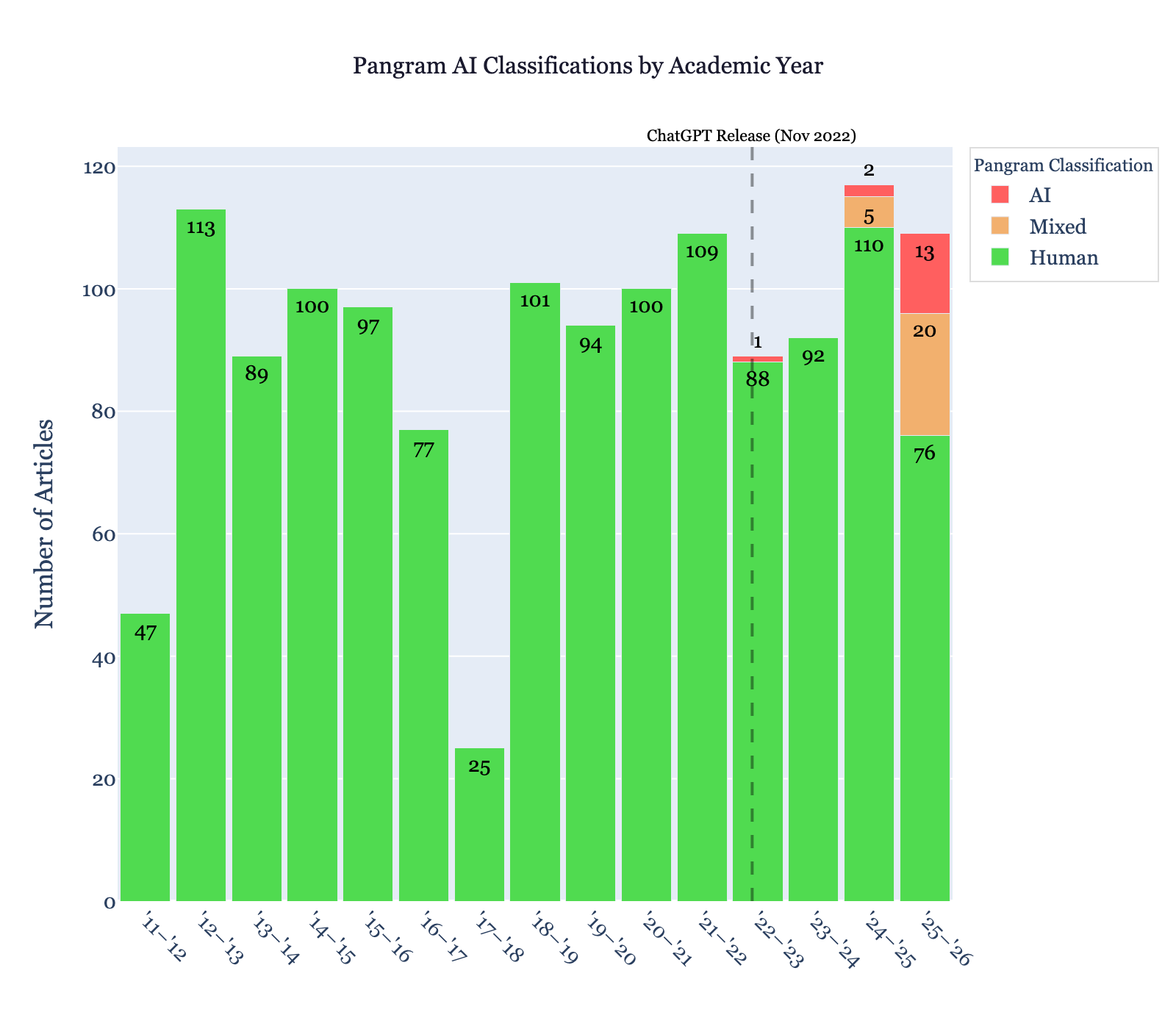

I took 1,359 Amherst Student opinion articles published from the start of the 2011-2012 academic year through April 8, 2026 (excluding only satire and pieces shorter than 60 words) and ran them through an AI-detection tool called Pangram, whose results have been cited in The Atlantic. Pangram classified each article as written by a human, by a language model, or by a mix of both.

What I Found

Until the end of 2022, when ChatGPT was introduced, Pangram didn’t flag a single one of the 997 articles published up to that point as containing AI-generated prose. Pangram clearly avoids false positives on Amherst opinion pieces. (A University of Chicago research team also found that Pangram misclassifies well under 0.5% of human-written text as AI-generated.)

The first article Pangram flagged was published six months after ChatGPT was released. But AI use in The Student didn’t really ramp up until this year, when Pangram marked over 30% of opinion articles as containing AI-generated prose. Thirteen were marked as fully AI-written.

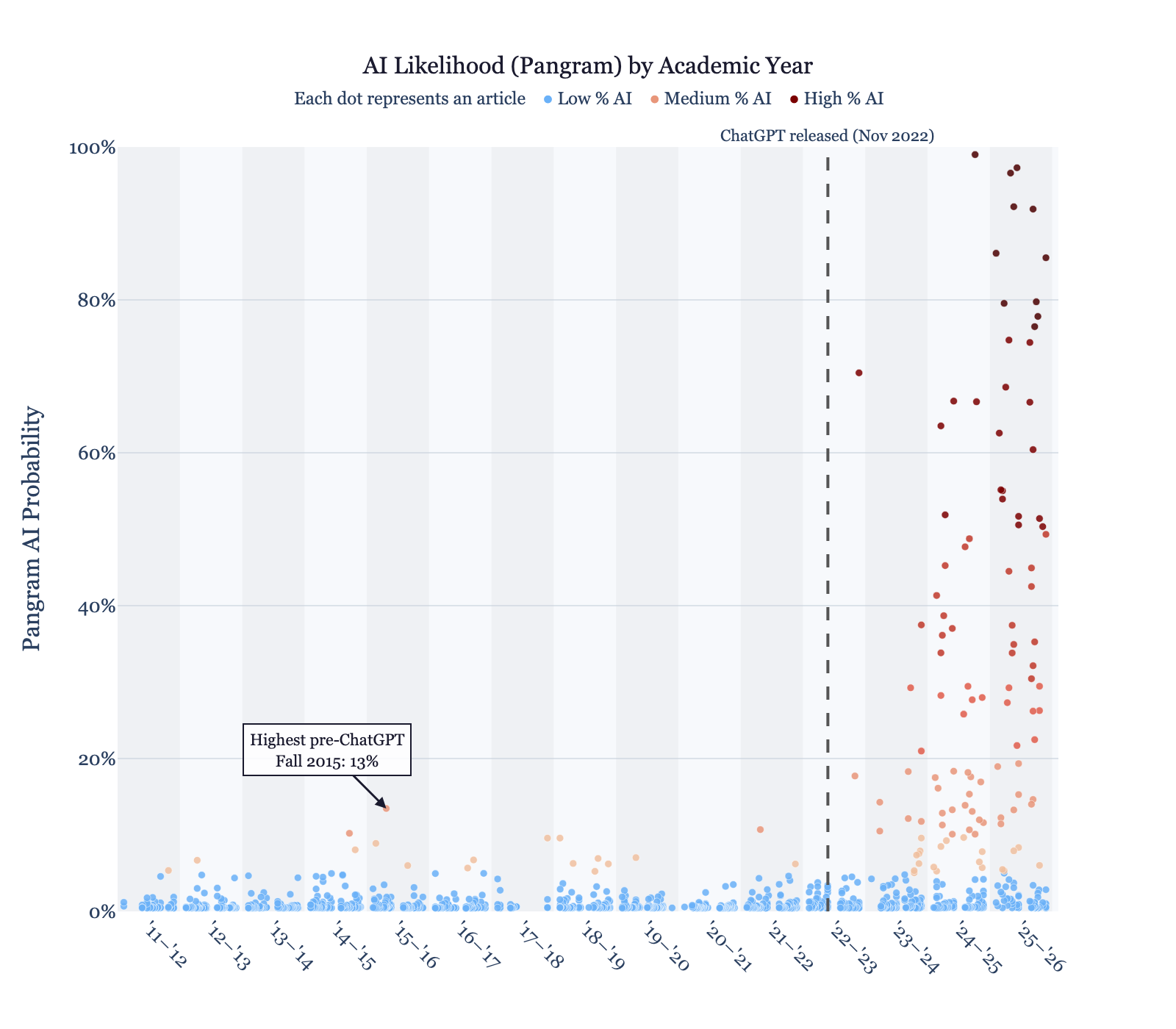

Beyond a simple classification, Pangram also estimates the likelihood (from 0% to 100%) that each article contains AI-generated prose. This overall likelihood is only one of the factors Pangram weighs in making its classifications.

The plot below is especially illustrative. Each dot is an article, and its height is that likelihood.

Notice that only three of the 997 articles published before ChatGPT’s release were assigned a probability above 10% of containing AI-generated prose. That is roughly in line with a working paper’s estimate that Pangram’s model, at a 10% likelihood threshold, has a false positive rate of about 0.1%.

If that trend had held, we’d expect at most one or two of the opinion pieces published since ChatGPT’s release to cross that threshold. Instead, 93 did (25.7% of all opinion pieces since).
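For readers who want to check that expectation, here is a rough sketch of the arithmetic. It assumes (my simplification, not how Pangram itself works) that each human-written piece crosses the 10% threshold independently at the pre-ChatGPT rate of three in 997, which makes the count a binomial variable. The post-release total of 362 is inferred from the figures above (1,359 minus 997), and the variable and function names are illustrative only.

```python
from math import comb

# Counts taken from the letter; POST_TOTAL is inferred as 1,359 minus 997.
PRE_TOTAL = 997                  # opinion pieces before ChatGPT's release
PRE_OVER_10 = 3                  # of those, pieces Pangram scored above 10%
POST_TOTAL = 1359 - PRE_TOTAL    # pieces published since the release (362)
POST_OVER_10 = 93                # of those, pieces scored above 10%


def expected_false_positives(rate: float, n: int) -> float:
    """Expected number of human-written pieces crossing the threshold."""
    return rate * n


def binomial_tail(n: int, p: float, k: int) -> float:
    """P(X >= k) for X ~ Binomial(n, p), summed term by term.

    Assumes every piece crosses the threshold independently with probability p.
    """
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


baseline = PRE_OVER_10 / PRE_TOTAL   # pre-ChatGPT rate, about 0.3%
print(f"post-release share: {POST_OVER_10 / POST_TOTAL:.1%}")
print(f"expected at baseline rate: {expected_false_positives(baseline, POST_TOTAL):.2f}")
print(f"expected at 0.1% rate: {expected_false_positives(0.001, POST_TOTAL):.2f}")
print(f"P(>= 93 by chance): {binomial_tail(POST_TOTAL, baseline, POST_OVER_10):.1e}")
```

Run as-is, it prints a post-release share of 25.7% and an expected count of about one piece at the baseline rate; under this simple model, the chance of 93 or more human-written pieces crossing the threshold is vanishingly small.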

I’m mindful not to name names; as James Taranto warns in The Wall Street Journal, “to accuse [writers] of passing AI-generated work as their own is potentially defamatory.” But even those critical of using Pangram to accuse specific writers admit that “Pangram’s aggregate findings are credible.” That is, if Pangram says AI use is increasingly prevalent across the opinion section, it probably is.

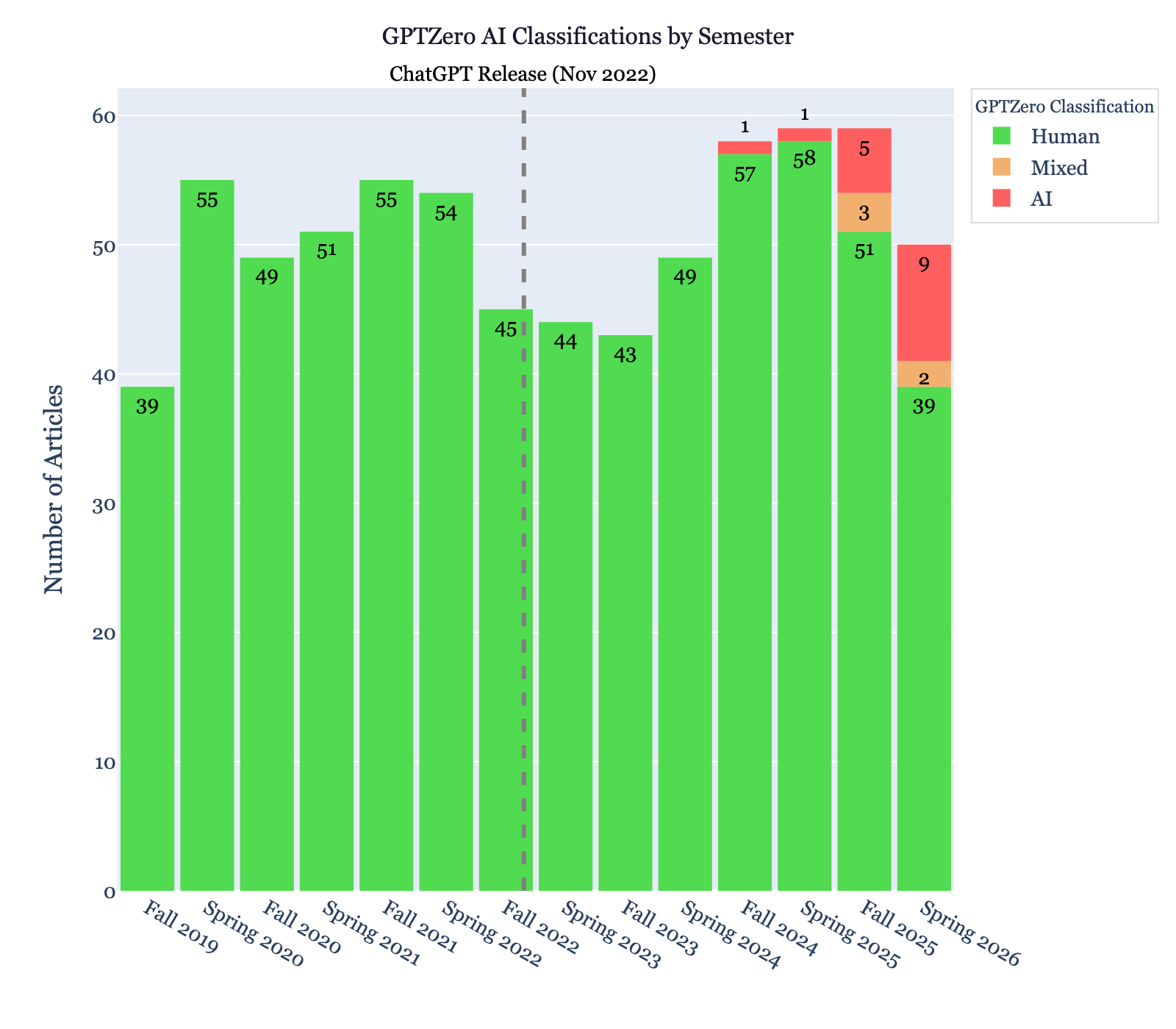

Still, for a second opinion, I checked the results against another tool, GPTZero, which Business Insider considers the best free AI-detection software, albeit one that is often too conservative. For GPTZero, I only went back to fall 2019.

I found the same pattern: not a single false positive before ChatGPT was released, and very little flagging until this year. For 2025-2026, though, GPTZero classifies 17% of articles as containing AI-generated prose.

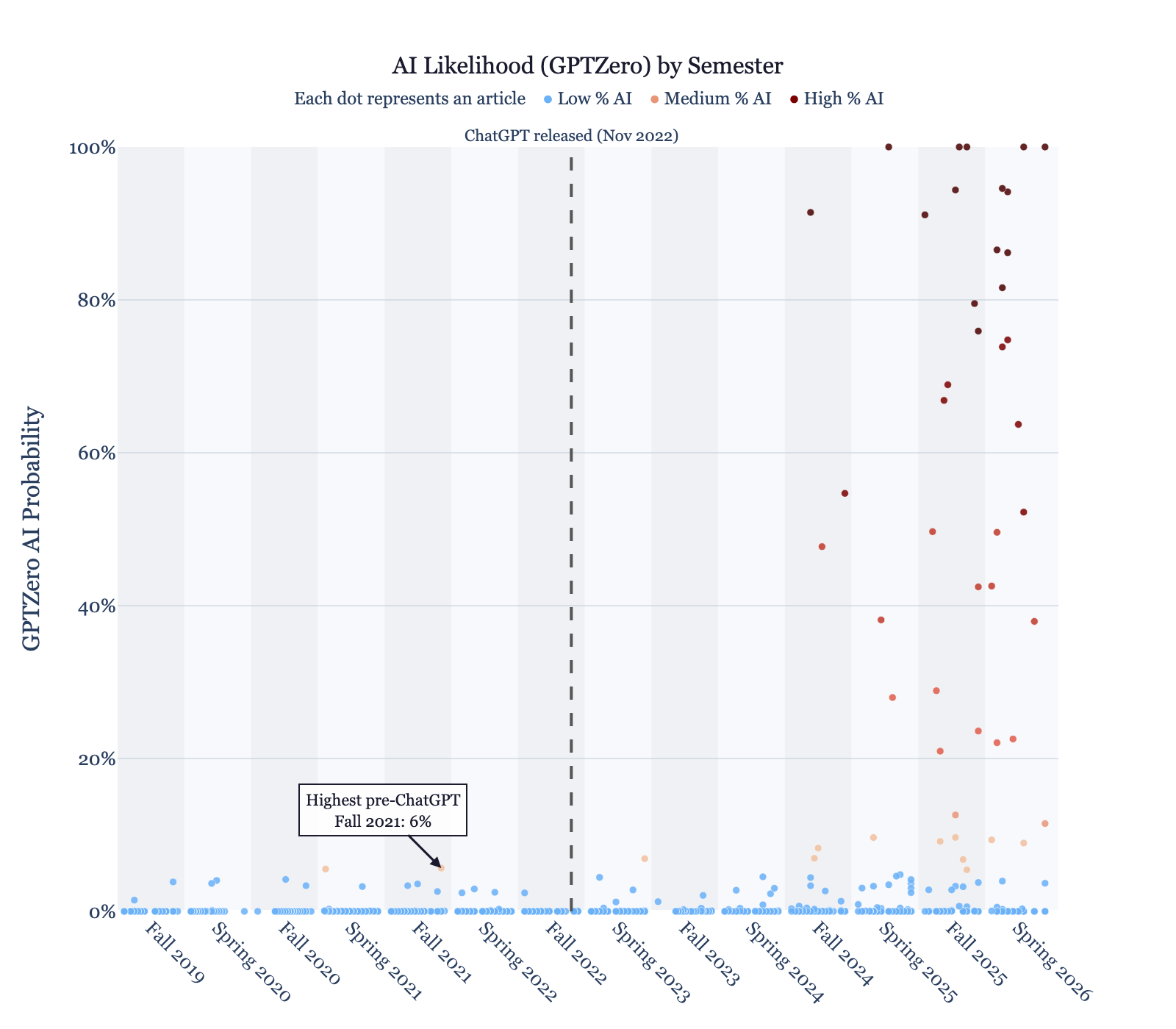

GPTZero also provides a likelihood that each article was human-written, completely AI-written, or a mix of both. In this plot, I combined the probabilities for “completely AI-written” and “mixed,” so that it represents the total probability that each article contains AI prose.

(Similar analyses of the news and features sections gave more encouraging results. According to Pangram, not one of the 373 news articles written since ChatGPT’s release likely contained AI. Yay, news! Eleven of 355 features articles likely did, a modest number compared with opinion but still not ideal. This seems to be a mostly opinion-specific phenomenon.)

This Is Bad

I don’t want to suggest that the authors of these articles are completely uninvolved in their writing. In fact, I’m still very hopeful that their pieces are based on their own thoughts. Both detectors operate at a linguistic level; that is, they detect whether the prose itself is AI-generated, so they would also flag articles in which language models “merely” rewrote a writer’s ideas.

Still, the data shows many authors are letting AI do too much. Even if the articles are based on writers’ thoughts, opinion writers should articulate their ideas themselves.

In 2023, writing on the use of AI at Amherst, the Editorial Board said, “The value in human writing is not only the capacity for creativity and originality but the time and effort put in: the hours spent struggling with the material, discussing ideas with others, and synthesizing those ideas to form an argument.”

The Student should be a place for that kind of human writing. It should not be a place for opinions nobody can bother to express.
